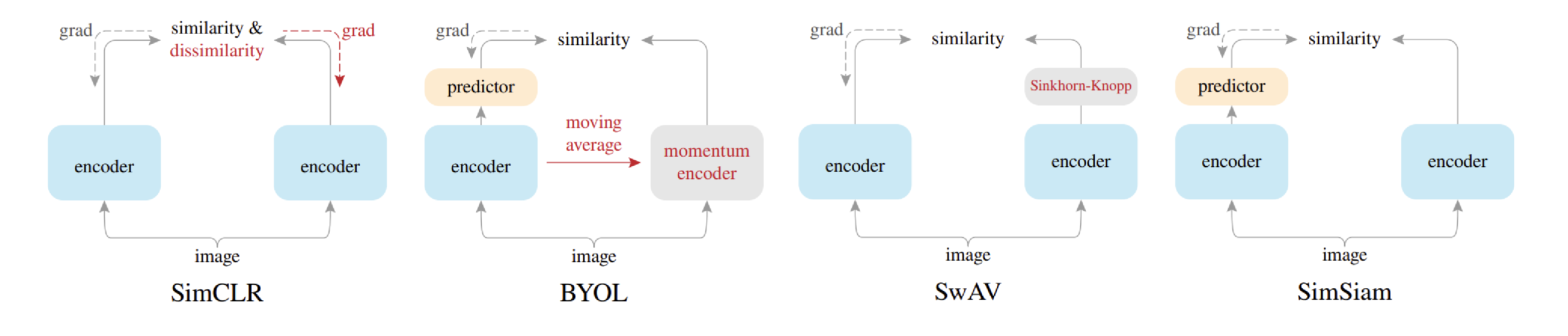

Sabine: With us today, we have Mateusz Opala, who is going to be answering questions about leveraging unlabeled image data with self-supervised learning or pseudo-labeling. Welcome, Mateusz.

Mateusz Opala: Hello, everyone. Happy to be here.

Sabine: It’s great to have you.

Self-supervised learning (SSL), which aims to harness unlabelled data by learning its structure and invariances, has accumulated a large body of work over the last few years. Self-supervised learning leverages a carefully defined pretext task for supervised feature learning, where the supervision is automatically generated from the data itself. In this way, a vast number of training instances with supervision can be generated from the unlabeled data to train a model for the pretext task. Prominent SSL methods, such as Masked Language Modeling (MLM) (Devlin et al.

Self-supervision, where data help annotate themselves, has received relatively little attention on tabular data, which drives a large proportion of business and application domains. Self-supervised learning has been shown to be very effective in learning useful representations, and yet much of the success is achieved in data types such.

Figure 2: The proposed self- and semi-supervised learning frameworks on exemplary tabular data. (a) In self-supervised learning, the mask and feature vector estimators try to recover the original unlabeled feature vector (1 7 4 3 5 1) and the mask vector (0 0 1 0) from its masked and corrupted variant (1 7 6 3 2 1).

Abstract of the paper titled Revisiting Self-Training with Regularized Pseudo-Labeling for Tabular Data, by Minwook Kim and 2 other authors: Recent progress in semi- and self-supervised learning has caused a rift in the long-held belief about the need for an enormous amount of labeled data for machine learning and the irrelevancy of unlabeled data. Although it has been successful on various kinds of data, there is no dominant semi- and self-supervised learning method that can be generalized for tabular data (i.e., most of the existing methods require appropriate tabular datasets and architectures). In this paper, we revisit self-training, which can be applied to any kind of algorithm, including the most widely used architecture, the gradient boosting decision tree, and introduce curriculum pseudo-labeling (a state-of-the-art pseudo-labeling technique in the image domain) for the tabular domain. Furthermore, existing pseudo-labeling techniques do not assure the cluster assumption when computing confidence scores of pseudo-labels generated from unlabeled data. To overcome this issue, we propose a novel pseudo-labeling approach that regularizes the confidence scores based on the likelihoods of the pseudo-labels, so that more reliable pseudo-labels, which lie in high-density regions, can be obtained. We exhaustively validate the superiority of our approaches using various models and tabular datasets.

TabNet: Attentive Interpretable Tabular Learning. We propose a novel high-performance and interpretable canonical deep tabular data learning architecture, TabNet.
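The Figure 2 caption describes a pretext task in which a model recovers the original features and the mask from a masked and corrupted row. The sketch below shows one way such training pairs could be generated. It is a minimal illustration, not the authors' implementation: the function name `mask_and_corrupt`, the parameter `p_mask`, and the choice to replace a masked value with the same column's value from a randomly chosen row are all assumptions made here.

```python
import random


def mask_and_corrupt(rows, p_mask=0.3, seed=0):
    """Return (corrupted_rows, masks) for a list of equal-length feature rows.

    Assumed scheme: each entry is selected with probability p_mask and
    replaced by the same column's value taken from a randomly chosen row.
    The mask bit is 1 only where the value actually changed.
    """
    rng = random.Random(seed)
    n_rows = len(rows)
    corrupted, masks = [], []
    for row in rows:
        new_row, mask = [], []
        for j, value in enumerate(row):
            if rng.random() < p_mask:
                # Draw a replacement from the empirical distribution of column j.
                replacement = rows[rng.randrange(n_rows)][j]
            else:
                replacement = value
            new_row.append(replacement)
            mask.append(int(replacement != value))
        corrupted.append(new_row)
        masks.append(mask)
    return corrupted, masks


# Toy table; the values are illustrative only.
rows = [
    [1, 7, 4, 3, 5, 1],
    [2, 6, 6, 3, 2, 4],
    [9, 7, 1, 8, 2, 1],
]
corrupted, masks = mask_and_corrupt(rows, p_mask=0.5, seed=42)
```

A pretext model would take `corrupted` as input and be trained to predict both `masks` and the original `rows`, so the supervision comes from the unlabeled table itself.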
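The abstract's idea of regularizing pseudo-label confidence by likelihood, so that accepted pseudo-labels lie in high-density regions, can be illustrated with a small self-training loop. This is a sketch under stated assumptions, not the paper's method: the nearest-centroid classifier, the Gaussian kernel density estimate, the product `confidence * normalized density`, and the fixed `threshold` (used here in place of curriculum pseudo-labeling) are all choices made for this example.

```python
import math


def sq_dist(a, b):
    return sum((u - v) ** 2 for u, v in zip(a, b))


def centroids(X, y):
    """Mean feature vector per class."""
    sums, counts = {}, {}
    for x, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(x))
        for j, v in enumerate(x):
            acc[j] += v
        counts[label] = counts.get(label, 0) + 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}


def predict_proba(cents, x):
    """Softmax over negative squared distances to the class centroids."""
    scores = {c: -sq_dist(x, m) for c, m in cents.items()}
    top = max(scores.values())
    exps = {c: math.exp(s - top) for c, s in scores.items()}
    total = sum(exps.values())
    return {c: e / total for c, e in exps.items()}


def density(x, data, bandwidth=1.0):
    """Unnormalized Gaussian kernel density estimate of x under data."""
    return sum(math.exp(-sq_dist(x, d) / (2 * bandwidth ** 2)) for d in data) / len(data)


def self_train(X_lab, y_lab, X_unl, threshold=0.6, rounds=5):
    """Self-training where confidence is scaled by a normalized density."""
    X_lab, y_lab, pool = list(X_lab), list(y_lab), list(X_unl)
    all_points = X_lab + pool
    max_dens = max(density(p, all_points) for p in all_points)
    for _ in range(rounds):
        cents = centroids(X_lab, y_lab)
        remaining, added = [], False
        for x in pool:
            proba = predict_proba(cents, x)
            label = max(proba, key=proba.get)
            # Assumed regularizer: down-weight confidence in sparse regions.
            score = proba[label] * density(x, all_points) / max_dens
            if score >= threshold:
                X_lab.append(x)
                y_lab.append(label)
                added = True
            else:
                remaining.append(x)
        pool = remaining
        if not added:
            break
    return centroids(X_lab, y_lab), X_lab, y_lab, pool


# Two tight clusters plus one isolated point; values are illustrative only.
X_lab = [[0.0, 0.0], [5.0, 5.0]]
y_lab = [0, 1]
X_unl = [[0.2, 0.1], [-0.1, 0.3], [0.1, -0.2],
         [5.1, 4.9], [4.8, 5.2], [5.2, 5.1], [2.5, 12.0]]
cents, X_all, y_all, rejected = self_train(X_lab, y_lab, X_unl)
```

In this toy setup the isolated point gets a near-certain class probability from the classifier, yet its low density keeps the regularized score under the threshold, so it stays in `rejected` while the cluster points are pseudo-labeled.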
